AI in the Enterprise: Risk and Hope in 2026

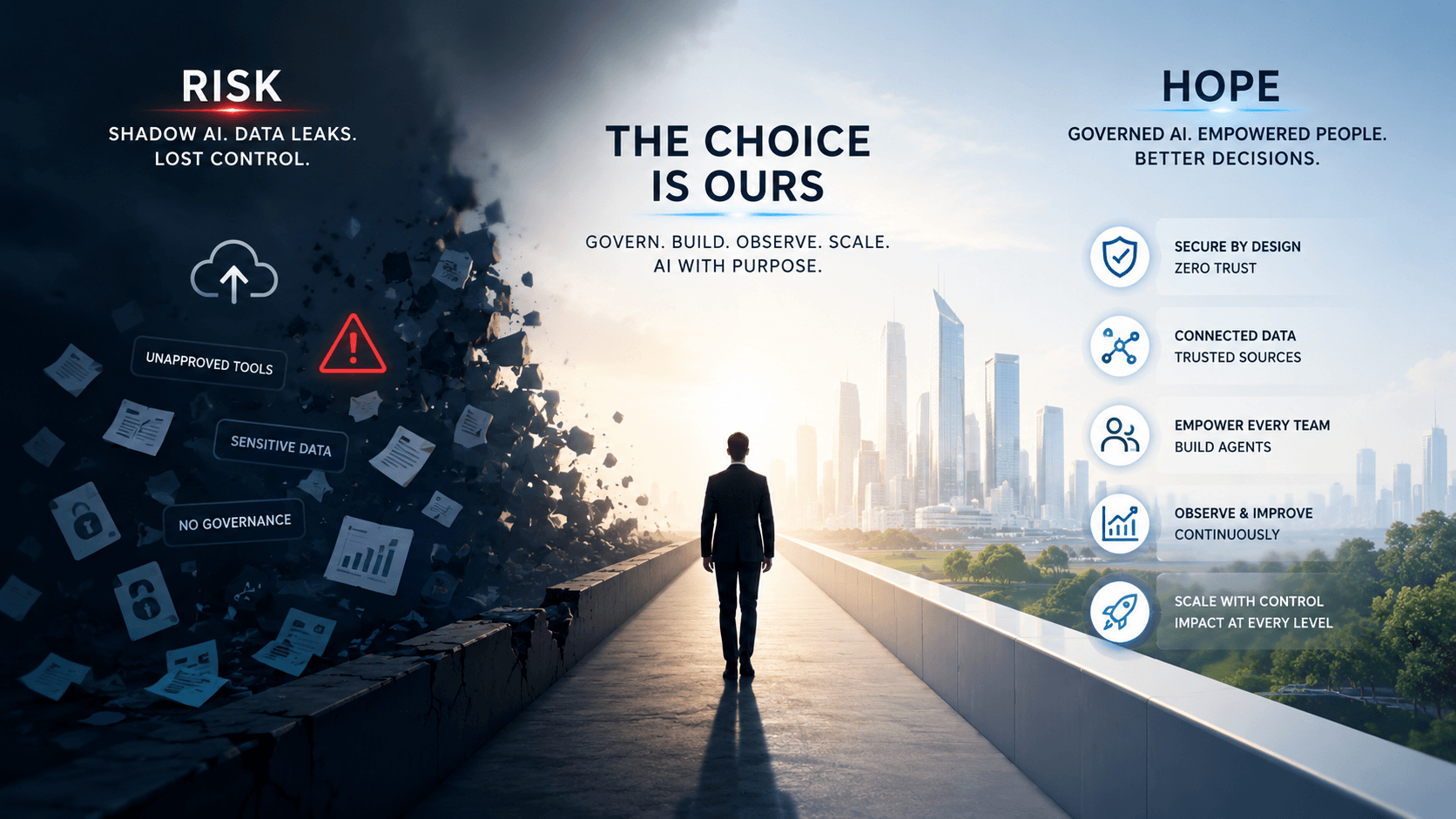

AI in 2026 is no longer optional: companies must govern it, secure it, and turn it into a shared capability, not just another licensed tool.

AI in the Enterprise: Between the Empire of Risk and the Hope of a New Operational Republic

“I have a bad feeling about this.”

That Star Wars line captures quite well what many leaders should feel when they look at how their organizations are “adopting” artificial intelligence.

Because let’s be honest: many companies believe they have already crossed the threshold simply because they bought licenses for ChatGPT Enterprise, Gemini Enterprise, Microsoft Copilot, or some other corporate solution. They feel modern. They feel like they are riding the wave. They feel, finally, as if they are inside the future.

But the truth is more uncomfortable: buying licenses is not adopting AI. It is merely turning on a lightsaber without knowing whether the person holding it is a Jedi, an apprentice, or someone who is about to accidentally cut off the organization’s hand.

Today, anyone inside a company may be using AI with permission, without permission, or somewhere in a gray area. They may be uploading financial reports to a public LLM, pasting commercial strategies into a presentation tool, or using Gamma to create a “beautiful” meeting deck. They may be summarizing contracts, copying databases, and sharing prices, margins, expenses, credentials, and strategic plans, in effect the entire DNA of the business, on platforms that no one in technology, security, or compliance is actually observing.

And the worst part is that, often, that person is not doing it out of negligence. They are doing it because they want to work better. Because they have a meeting. Because they are tired. Because they need to deliver. Because the organization demands speed but has not given them a safe path.

That is the real problem: AI has already entered the company, even if the company has not yet decided how to govern it.

Microsoft and LinkedIn reported in their 2024 Work Trend Index that 75% of knowledge workers were already using AI at work, and that usage had nearly doubled in six months. They also noted that many employees were bringing their own AI tools to work because their organizations were not moving fast enough. Later reports on “shadow AI” have shown a similar concern: employees using unapproved tools, personal accounts, and external platforms to process sensitive information. For example, TELUS Digital reported in 2025 that 57% of enterprise employees using GenAI admitted to entering sensitive or high-risk information into public assistants, and that 68% accessed those assistants through personal accounts.

So the question is no longer whether AI will be used. It is already being used.

The question is whether the company will turn it into a secure, governed, cross-functional capability, or whether it will allow it to become a digital smuggling network.

False Adoption: Licenses Are Not Transformation

There is a dangerous confusion in the market: believing that enabling a tool is the same as transforming an organization.

That is like thinking the Rebellion won because it acquired some X-Wings. No. It won because it had purpose, coordination, intelligence, training, strategy, and a network capable of executing under pressure.

A company does not adopt AI because it pays for licenses. It adopts AI when it changes the way it thinks, operates, decides, and improves. Real adoption happens when people stop using AI only to write faster and start using it to redesign their work.

AI should not be seen as a machine for making prettier emails or more elegant presentations. That is the surface level. Useful, yes, but insufficient.

The real leap happens when each area starts asking:

What repetitive task do we do every month that steals hours from us?

What process depends on one person who always has to “know where everything is”?

What analysis do we complete late because the information lives in fragments?

What report is still built manually even though the data already exists?

What decision is made by intuition because nobody has time to connect the right sources?

That is where real adoption begins. Not in the beautiful prompt, but in the redesign of work.

The Fear of Losing Jobs and the Opportunity to Elevate Thinking

One of the most common fears is that AI will come to take jobs. And yes, it would be naïve to deny that there will be automation, displacement, and deep changes in operational roles. But dwelling only on that fear is like staring at the Death Star and forgetting that there is also a Rebel Alliance.

AI can destroy value if it is implemented as an excuse to cut without redesigning. But it can also multiply value if it is used to free the human mind from tasks that should never have taken up so much space.

Many jobs today are full of friction: copying, pasting, consolidating, reviewing, chasing information, preparing reports, searching for documents, reconciling data, answering the same thing a hundred times, converting tacit knowledge into operational deliverables. These are not worthless tasks, but they often consume the energy that should be dedicated to thinking better.

The hope is that AI forces us to level up. To move from process operators to system designers. From tired executors to more attentive strategists. From people who “do what has to be done” to people who ask: how do we push this one step further?

Properly adopted AI does not replace human ambition. It focuses it.

Technology Should Not Be the Bottleneck

Here is another common mistake: believing that the technology department must build every agent in the organization.

That does not scale.

If every area needs to automate tasks, create assistants, connect data, generate workflows, summarize information, analyze operations, or answer internal questions, technology cannot become a centralized factory of small agents for everyone. That model dies from saturation.

The role of technology must change.

Technology should not be the only builder. It should be the architect of the secure environment.

Its responsibility should be to:

1. Deliver reliable data sources.

Not just any stray Excel file. Not just any duplicated folder. Clean, updated, traceable, and authorized sources.

2. Govern access.

Who can see what. Which agent can query which system. What data can leave. What actions require approval.

3. Build the secure perimeter.

Enterprise AI must operate inside a private, auditable environment aligned with security policy.

4. Enable integrations.

APIs, connectors, MCP (Model Context Protocol) servers, internal services, databases, documentation, and operational systems connected in a controlled way.

5. Observe and audit.

It is not enough for the agent to work. We need to know what it did, why it did it, with which data, under which identity, and with what result.

In other words: technology should not manufacture all the droids. It should build the factory, the rules, the protocols, and the limits so that the droids can work without putting the ship at risk.

Gemini Enterprise as a Possible “Mecca” of Enterprise AI

In this context, Gemini Enterprise and, especially, Gemini Enterprise Agent Platform emerge as a highly relevant proposal. Not because one tool alone can solve transformation, but because Google is pointing toward something companies urgently need: moving from isolated tools to a governed platform for agents.

Google introduced Gemini Enterprise Agent Platform in April 2026 as a platform to build, scale, govern, and optimize enterprise agents. The company describes it as the evolution of Vertex AI, integrating capabilities for model selection, model and agent development, plus new functions for integration, DevOps, orchestration, and security.

This point matters: the conversation is no longer only “which model do we use?” The conversation is: how do we build, govern, observe, and scale agents that act inside the company?

Google Cloud’s official documentation positions Agent Platform as a platform for creating enterprise agents connected to internal data, with components for building, scaling, governance, and optimization. It includes tools such as Agent Development Kit, Agent Studio, RAG Engine, Vector Search, Agent Gateway, semantic governance policies, content safety, IAM, evaluation, observability, traces, and online monitoring.

That connects directly with the real need of companies: not having one more chatbot, but having an environment where agents can operate with reliable data, clear permissions, and supervision.

Bain & Company summarized the shift clearly when analyzing Google Cloud Next 2026: enterprise AI is moving beyond agent creation toward agent governance. It also highlighted that identity, context, and security are becoming core infrastructure, not accessories.

That is the critical point. In the agentic era, the risk is not only that someone asks an inappropriate question. The risk is that an agent can act: query systems, modify information, send emails, generate documents, make preliminary decisions, or trigger workflows. That is why every agent needs identity, permissions, traceability, and limits.

Build, Govern, Observe, Scale: The New Map of Adoption

Real AI adoption should be organized around four verbs.

1. Build: Build from the Workplace Outward

The future is not one where only technology builds. The future is one where every area can create its own tools within a secure framework.

Finance can create agents to explain expense variations.

Sales can create assistants to prepare proposals using approved data.

Operations can automate recurring reports.

Human Resources can answer internal questions without exposing sensitive data.

Legal can create preliminary review workflows.

Leadership can gain cross-functional visibility without asking for twenty manual reports.

But “build” does not mean chaos. It means empowerment with boundaries. Low-code tools, prebuilt agents, templates, connectors, and reliable data. The platform must allow the business to build, but within an architecture designed by technology, security, and data teams.

As Yoda would say: “Do. Or do not. There is no try.”

You cannot “half-adopt” AI. Either you build an organizational capability, or you accumulate scattered tools.

2. Govern: Govern Before Scaling

Governance is not about slowing things down. Governance is what allows AI to move forward without turning the company into a minefield.

This includes data policies, information classification, access controls, auditing, agent identity, external tool review, approved agent catalogs, prompt and output monitoring, and clear rules about what information can be used in each context.

Google, for example, mentions capabilities such as Agent Identity, Agent Registry, and Agent Gateway so that agents have traceable identities and operate under enterprise guardrails. This type of approach is essential because an agent should not be a black box acting with permissions inherited from just any user.

Governance must answer concrete questions:

What agent exists?

Who created it?

What data does it use?

What actions can it perform?

What permissions does it have?

Who approved it?

What logs does it leave?

How do we shut it down if something goes wrong?

Without those answers, there is no adoption. There is faith. And in enterprise security, faith is not a policy.
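As a rough illustration, the governance questions above can be written down as one registry record per agent. This is only a sketch: `AgentRecord`, `governance_gaps`, the field names, and the sample values are hypothetical and do not correspond to any vendor's registry API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AgentRecord:
    """Hypothetical registry entry; each field answers one governance question."""
    name: str                              # What agent exists?
    created_by: str                        # Who created it?
    data_sources: List[str]                # What data does it use?
    allowed_actions: List[str]             # What actions can it perform?
    permissions: List[str]                 # What permissions does it have?
    approved_by: Optional[str] = None      # Who approved it?
    log_destination: Optional[str] = None  # What logs does it leave?
    enabled: bool = True                   # Switch used to shut it down


def governance_gaps(record: AgentRecord) -> List[str]:
    """Return the governance questions this record leaves unanswered."""
    checks = [
        ("creator", record.created_by),
        ("data sources", record.data_sources),
        ("allowed actions", record.allowed_actions),
        ("permissions", record.permissions),
        ("approver", record.approved_by),
        ("logs", record.log_destination),
    ]
    return [label for label, value in checks if not value]


def shut_down(record: AgentRecord) -> None:
    """Disable the agent; a real platform would also revoke its credentials."""
    record.enabled = False


draft = AgentRecord(
    name="expense-variance-explainer",
    created_by="finance-analytics",
    data_sources=["approved_ledger_view"],
    allowed_actions=["read", "summarize"],
    permissions=["ledger:read"],
)
print(governance_gaps(draft))  # ['approver', 'logs']
```

The point of the sketch is that an agent with open gaps is not ready for production: here the draft has no approver and no log destination, so it should not be published to an approved catalog yet.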

3. Observe: Observe to Learn and Correct

An agent should not be measured only by whether it “answers nicely.” It should be measured by quality, accuracy, cost, latency, usefulness, security, and traceability.

Observability is what allows us to move from experiment to operation. It lets us see what the agent did, which tools it called, what data it used, where it failed, when it hallucinated, how much it cost, and what impact it had.
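A minimal sketch of what such a trace might record, assuming a simple in-memory structure. The names `AgentTrace` and `ToolCall`, the fields, and the sample values are hypothetical; a real deployment would rely on the tracing and monitoring features of its platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ToolCall:
    tool: str          # Which tool the agent called
    latency_ms: float  # How long the call took
    ok: bool           # Whether the call succeeded


@dataclass
class AgentTrace:
    """Hypothetical per-run trace: what the agent did, as whom, at what cost."""
    agent: str
    identity: str
    tool_calls: List[ToolCall] = field(default_factory=list)
    cost_usd: float = 0.0

    def record(self, tool: str, latency_ms: float, ok: bool,
               cost_usd: float = 0.0) -> None:
        """Append one tool call and accumulate its cost."""
        self.tool_calls.append(ToolCall(tool, latency_ms, ok))
        self.cost_usd += cost_usd

    def summary(self) -> Dict[str, object]:
        """Condense the run into the figures a reviewer would look at."""
        return {
            "agent": self.agent,
            "identity": self.identity,
            "calls": len(self.tool_calls),
            "failed_tools": [c.tool for c in self.tool_calls if not c.ok],
            "total_latency_ms": sum(c.latency_ms for c in self.tool_calls),
            "cost_usd": round(self.cost_usd, 4),
        }


trace = AgentTrace(agent="monthly-report", identity="svc-ops-reporting")
trace.record("query_sales_db", latency_ms=120.0, ok=True, cost_usd=0.002)
trace.record("send_email", latency_ms=45.0, ok=False)
print(trace.summary())
```

Even this small record answers several of the questions in the paragraph above: which tools were called, where the run failed, how long it took, and how much it cost.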

Gemini Enterprise Agent Platform’s documentation includes capabilities such as evaluation, observability, traces, topology, offline evaluation, simulation, online monitoring, and prompt optimization. This points to a basic truth: enterprise agents are not toys. They are living systems that must be tested, audited, and improved.

An agent without observability is like flying the Millennium Falcon with your eyes closed. You might arrive. You might also cross an asteroid field without realizing it.

4. Scale: Scale Without Losing Control

Scaling AI is not giving everyone access and hoping for the best. Scaling means turning successful use cases into repeatable, secure, and measurable capabilities.

This requires patterns: agent templates, reusable components, approved connectors, reliable data sources, well-designed MCP servers, agent owners, usage metrics, continuous review, and internal training.

Google describes Agent Runtime, sessions, and Memory Bank as pieces that support long-running agents with state and persistent context. This type of capability opens the door to agents that do not merely answer questions, but accompany entire processes.

But the more autonomy, the more governance. The more context, the more responsibility. The more integration, the greater the need for Zero Trust.

Zero Trust: Do Not Trust Even Enthusiasm

Enterprise AI needs a Zero Trust mindset from the beginning. Not because employees are enemies, but because enthusiasm without controls can leak critical information.

Zero Trust applied to AI means nothing should have access by default. Not users, not agents, not connectors, not external tools. Every access must be justified, limited, recorded, and reviewed.
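In code, deny-by-default can look roughly like the sketch below. The identities, resources, and the `is_allowed` helper are hypothetical, and the grant table stands in for whatever IAM system the company actually uses.

```python
from typing import Dict, List, Set, Tuple

# Explicit grants as (identity, resource, action). Anything not listed is denied.
GRANTS: Set[Tuple[str, str, str]] = {
    ("agent:proposal-assistant", "approved_price_list", "read"),
    ("user:ana", "expense_reports", "read"),
}

# Every decision is recorded, whether it was allowed or denied.
ACCESS_LOG: List[Dict[str, object]] = []


def is_allowed(identity: str, resource: str, action: str) -> bool:
    """Allow only what was explicitly granted, and log the decision."""
    allowed = (identity, resource, action) in GRANTS
    ACCESS_LOG.append({
        "identity": identity,
        "resource": resource,
        "action": action,
        "allowed": allowed,
    })
    return allowed


# The agent may read the approved price list, but nothing else.
print(is_allowed("agent:proposal-assistant", "approved_price_list", "read"))
print(is_allowed("agent:proposal-assistant", "approved_price_list", "export"))
print(is_allowed("agent:proposal-assistant", "contracts", "read"))
```

The design choice worth noticing is the absence of a fallback rule: there is no "allow if unknown" branch, so a new agent or connector has no access until someone grants it, and the log makes each grant reviewable afterward.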

The company must protect what is private to it. Its prices, expenses, contracts, strategies, code, credentials, internal processes, margins, accumulated knowledge, and decisions cannot be scattered across external platforms because someone needed a more aesthetic presentation.

The paradox is that prohibition does not work. If the official tool is bad, slow, or useless, people will use another one. If the safe path does not solve real work, the informal path will appear. The best defense against shadow AI is not only blocking; it is offering a safe alternative that is better than the risky one.

The 1% Change: The Real Revolution

The final idea in this vision may be the most important: if an organization of 200 people changes by 1%, everything changes.

Because 1% does not sound heroic. It does not look like a revolution. It does not feel like an epic scene with John Williams music. But in practice, that 1% can mean:

One less hour per person per week.

One automated monthly report.

One decision made with better data.

One repetitive process eliminated.

One agent answering internal questions.

One analyst who stops copying and starts interpreting.

One area that shares knowledge instead of hiding it in folders.

One team that measures better.

One manager who sees earlier.

One company that learns faster.

In 200 people, small changes become structural. Adoption does not begin with a grand speech. It begins with a hundred micro-transformations that, together, change the culture.
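A back-of-the-envelope version of that arithmetic, assuming a 40-hour work week (the week length is an assumption, not a figure from the text). It compares a literal 1% of everyone's week with the "one less hour per person per week" example from the list above.

```python
PEOPLE = 200          # organization size used in the text
HOURS_PER_WEEK = 40   # assumption: a standard full-time week

# Reading 1: a literal 1% of total weekly working hours.
one_percent_hours = PEOPLE * HOURS_PER_WEEK / 100

# Reading 2: one hour saved per person per week.
one_hour_each = PEOPLE * 1

for label, hours in [("1% of the week", one_percent_hours),
                     ("1 hour per person", one_hour_each)]:
    full_time_equivalents = hours / HOURS_PER_WEEK
    print(f"{label}: {hours} hours/week, about {full_time_equivalents} full-time weeks")
```

Under these assumptions, a literal 1% frees 80 hours every week and one hour per person frees 200, the equivalent of two and five full-time weeks respectively, recovered every week without hiring anyone.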

AI should not be a luxury reserved for the most technical people. It should be a distributed capability. Every person should be able to look at their work and ask: which part of this can become a tool? Which part can be automated? Which part can be connected? Which part can be elevated?

That is the true awakening of the organizational Force.

Conclusion: Leaders Versus Losers

The difference between leaders and losers will not be who bought a license first. It will be who understood first that AI is not software, but a new way of operating.

The losers will have scattered tools, leaked data, employees improvising, saturated technology teams, security teams playing catch-up, and leaders celebrating beautiful presentations without knowing what information was used to create them.

The leaders will have secure platforms, governed data, observable agents, and trained teams, with technology as an enabler, the business as a builder, and security as architecture, not as an obstacle.

AI will not do everything. That is another dangerous fantasy. But it can open spaces. Spaces to think better. To work with less friction. To connect areas. To reduce absurd tasks. To make visible what was previously fragmented. To help the company stop operating as silos and start behaving like an intelligent system.

In Star Wars, the Force does not belong to one person. It flows, connects, reveals, and transforms. In the enterprise, AI should aspire to something similar: not to be the toy of a few, but a shared capability that elevates the entire organization.

But for that, we must choose well.

It is not enough to say we are riding the wave.

It is not enough to have Gemini, ChatGPT, or any other tool.

It is not enough to congratulate the person who made the prettiest presentation.

The real question is: do we know where our information is, who uses it, which agents process it, what decisions it enables, and how we protect the company’s DNA while unlocking the potential of our people?

That is real adoption.

And perhaps, amid so much fear, that is the hope: that AI does not come to replace our ability to think, but to force us to think better.